India is reportedly pushing for local hosting of Anthropic’s AI models amid cybersecurity and digital sovereignty concerns.

Apple reportedly faces a $250 million AI marketing settlement while planning multi-model AI options for future iOS updates.

Spotify has introduced a ‘Verified’ badge to help users distinguish human artists from AI-generated content on the platform.

China has blocked Meta’s $2 billion Manus deal over AI tech transfer concerns, highlighting rising scrutiny on cross-border AI investments.

Donald Trump has hinted at a possible easing of his stance toward AI firm Anthropic, signaling potential policy shifts.

OpenAI introduces a policy blueprint aimed at preventing misuse of AI tools in generating harmful and illegal content.

Anthropic has appointed Amlan Mohanty to lead its India policy efforts, strengthening engagement with regulators and stakeholders.

Retail brands are prioritising first-party data strategies to improve targeting, build trust, and adapt to changing privacy regulations.

Gujarat High Court has restricted the use of AI tools in judicial work, citing concerns around accuracy and accountability.

The US government is drafting stricter AI guidelines as policymakers debate oversight standards with AI developer Anthropic amid growing concerns about advanced AI risks.

Meta’s Ray-Ban smart glasses face scrutiny after reports reveal human reviewers analyze recorded footage to train AI systems, raising privacy and consent concerns.

Overwhelmed by AI tools? Here’s a practical 2026 guide for beginner marketers on which AI tools to use, from writing and design to ads, CRM, analytics, and compliance.

AI is transforming marketing decision-making—but without governance, it can amplify bias, compliance risk, and brand damage. Here’s what leaders must prioritise in 2026.

OpenAI has hired an AI safety expert from Anthropic to oversee risk and governance, reflecting rising focus on responsible development of advanced AI systems.

Indian technology stocks fell after Anthropic launched a new AI risk tool, prompting investor concerns around compliance, transparency and future AI deployment costs.

IIM Lucknow has proposed an ethical framework to guide responsible use of AI in marketing, focusing on transparency, data privacy, fairness and accountability.

The European Union has launched a safety probe into Grok AI, examining whether X has met its obligations under the bloc’s digital platform risk compliance framework.

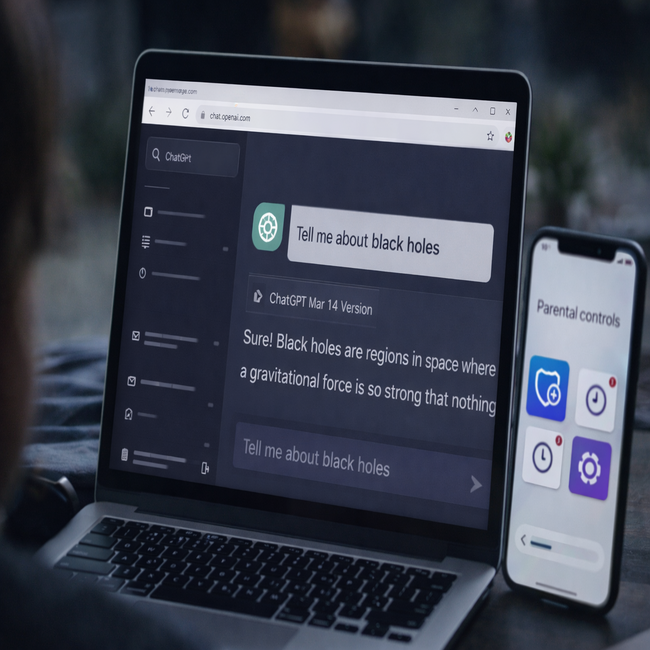

OpenAI introduces age prediction on ChatGPT to enhance teen safety, applying additional safeguards for users likely under 18 while allowing verification for adults.

Indonesia and Malaysia have restricted access to xAI’s Grok chatbot, citing concerns around content moderation, governance, and compliance with local regulations.

Italy has closed its probe into DeepSeek after reviewing concerns around AI hallucinations, reflecting Europe’s evolving approach to AI oversight.

Meta’s reported AI partnership with Manus AI faces Chinese regulatory scrutiny over potential technology export control concerns.

AI experts warn that safety and governance measures are falling behind rapid AI advancements, narrowing the window to manage long-term risks responsibly.

India has directed X to rein in Grok after AI-generated obscene content triggered regulatory scrutiny, highlighting growing concerns over AI governance.

Italian regulators have ordered Meta to suspend its WhatsApp AI chatbot, citing concerns over data processing, transparency and user consent under EU law.

Lyricist Javed Akhtar flags concerns over AI-generated deepfake content, highlighting rising risks of digital impersonation and misuse of public figures.

OpenAI is hiring a Head of Preparedness with a compensation package reaching $555,000 as it deepens focus on AI safety and risk management.

AI-generated product descriptions are creating accuracy issues on e-commerce platforms, raising concerns around consumer trust, misleading claims and the need for stronger human oversight.

South Korea will implement a comprehensive AI law from January 2026, setting new rules for safety, transparency and governance of artificial intelligence systems.

India’s DPDP Act 2023 introduces strict consent, data retention and breach rules, forcing marketers to rethink how they collect, store and use consumer data.

Meta India, Aman Jain, Public Policy, Technology Regulation, Social Media Policy, AI Governance, Digital Economy, Internet Platforms, Leadership Appointment

Disney accuses Google of AI copyright infringement shortly after OpenAI’s $1 billion deal, highlighting rising tensions over training data use.

India proposes mandatory royalties for AI training data, urging tech firms to compensate creators and ensure transparency in model development.

SAP outlines a unified approach to European AI and cloud sovereignty, focusing on data control, compliance and transparent enterprise adoption.

Microsoft will remove Copilot from WhatsApp next year after the platform enforces stricter rules on third-party AI chatbots, affecting integrations globally.

Martech enters a survival phase in 2026 as AI, privacy rules and budget pressure reshape stacks. Essential tools rise while outdated platforms fade.

Uber faces collective legal action in the UK over allegations that its AI-powered pay and management systems lack transparency and violate worker data rights.

India is witnessing a surge of AI-generated content—from robot anchors to chatbot-written articles—raising urgent questions about misinformation, ethics, and public trust in digital media.

India’s MeitY plans a full-fledged AI Act modelled on the IT Act to regulate synthetic and AI-generated content, following new deepfake rules.

India announces stricter AI regulations to combat deepfakes, requiring platforms to label synthetic and manipulated content.

AI models trained on decades of ads are reproducing brand content, raising new challenges around copyright, transparency, and marketing ethics as the line between learning and imitation blurs.

Data clean rooms are emerging as a solution to India’s privacy-first marketing challenges, but success depends on data accuracy, governance, and leadership.

Cookies linger, but AI has already redefined marketing—driving personalization, measurement, and identity resolution in a privacy-first, post-cookie era.

YouTube begins U.S. trials of AI-powered age verification, aiming to enhance safety and comply with regulations without disrupting user experience.